Improve performance with Http caching

06 May 2019

Performance is increasingly important for web applications. As the bulk of users for many websites now come from mobile devices, web developers have to pay extra attention to the performance of their applications to provide an acceptable experience for users visiting their site over mobile networks. There are a great number of tips and tricks to improve end-user performance, but the single most important one is proper caching. Caching can prevent parts of your application from being downloaded over the network altogether, eliminating any possible network issues for those parts. Setting up caching the right way can be difficult, though. The following explanations and tips can help you get a better understanding of caching and implement a proper caching solution.

What is Http caching

The definition of caching is storing something for later use. For your average squirrel this translates to storing acorns in a convenient location to retrieve them quickly when hunger sets in during the winter. For a web application this would translate to storing some data or resources in a convenient location from which they can later be retrieved quickly and easily. When something is cached, a copy of the original is stored in a separate datastore, called a cache store or simply a cache. Caching in general can be done anywhere for any kind of data, but Http caching focuses on caching Http responses.

Http caching deals with caching responses in a client-server setting. It is mostly used for Html websites but can be applied to any kind of Http traffic. Http caching allows for responses from a server to be cached on the client/browser or on any cache between the client and the server. Servers can put instructions in their responses to instruct caches where, how and how long they may cache a response. Because those responses will be cached on the client or between the client and the server, a significant amount of network traffic can be eliminated. The challenge of Http caching, or any caching for that matter, is how long to keep something in the cache. Any request resulting in a response being served from a cache will never reach the server for as long as the response is in the cache. This means that during this time the server has no control over this request and that can make it difficult to get an update to the client. But the benefits of reducing the number of requests reaching your server usually outweigh the possible difficulties involved with implementing Http caching.

Why use Http caching

For better performance

The most common reason to use caching is performance. Every action in your application that consumes an above average amount of time can be a candidate for caching. The following resources are good candidates for caching:

- A resource that takes a long time to render on the server-side

- A resource that is requested very often over the network

- A resource that takes a long time to download over the network

By caching the results of a heavy rendering function or the response of a slow network request you can significantly increase the overall performance of your application.

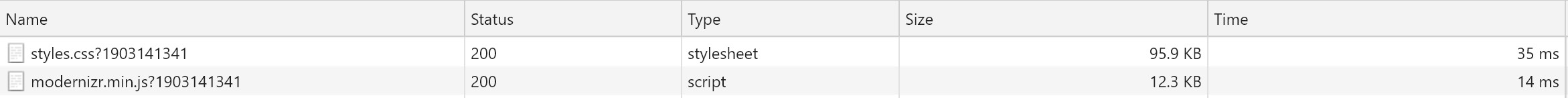

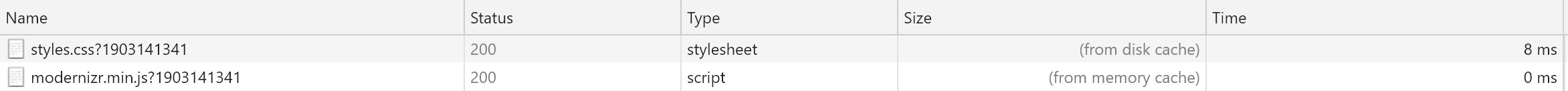

Requests without caching

Requests with caching

Keep in mind, though, that everything you put into your cache will have to be fetched from its original source once in a while. So make sure your application's performance is also acceptable when the resource is fetched from its original source. Caching should give your application a performance boost; it should not be used in such a way that your application doesn't work without it.

For higher availability

There is another reason to use caching, one which is often overlooked. Caching can play a major role in keeping applications available under heavy load. Imagine an application which heavily depends on data from a database but does not use any caching. In such an application every request directly results in a database query. In the event of an unexpected increase in load, the database can become unresponsive, making the application unavailable. If other applications use the same database, they can become unavailable too. If the application cached the result of each database query in memory for even a single minute, there would only be one database query per minute for each distinct query, no matter how many requests the application receives.
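As a rough illustration of that one-minute cache, here is a minimal in-memory sketch in Python. The names `ttl_cache` and `load_products` are hypothetical, and the query is assumed to be wrapped in a function whose arguments identify it. The sketch is not thread-safe, so simultaneous cache misses could still hit the database more than once.

```python
import time
from typing import Any, Callable, Dict, Tuple


def ttl_cache(ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
    """Keep each result in memory for ttl_seconds, keyed by the call's arguments.

    Not thread-safe: simultaneous misses may still run the query more than once.
    """
    def decorator(func: Callable[..., Any]) -> Callable[..., Any]:
        store: Dict[Tuple[Any, ...], Tuple[float, Any]] = {}

        def wrapper(*args: Any) -> Any:
            now = clock()
            entry = store.get(args)
            if entry is not None and now - entry[0] < ttl_seconds:
                return entry[1]            # still fresh: skip the database
            result = func(*args)           # missing or expired: query again
            store[args] = (now, result)
            return result

        return wrapper
    return decorator


query_count = 0


@ttl_cache(60)
def load_products(category: str) -> list:
    """Hypothetical stand-in for a real database query."""
    global query_count
    query_count += 1
    return [f"{category}-item"]


for _ in range(1000):          # 1000 requests within the same minute...
    load_products("shoes")
print(query_count)             # ...result in a single query: prints 1
```

The `clock` parameter is only there so the expiry can be exercised without waiting a real minute.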

How does Http caching work

Cache locations

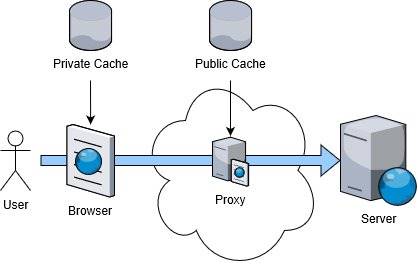

Responses can be cached in two locations in the Http request-response pipeline:

- private : in the browser of the user

- public : on any number of servers where the request passes through (like proxy servers or cache servers)

When a response is stored in one of these cache locations, it can be served from the cache at that location instead of from its original remote location. Depending on where the response is cached, you can eliminate (part of) the network traffic for requesting and retrieving the resource. The closer the cache location is to the user, the more network traffic is eliminated. Browser (or private) caching is of course the closest to the user you can get, so at first glance it is your best option for reducing network traffic and latency. The only caveat is that a browser cache only stores responses for a single user. When the next user comes along, he or she will have to retrieve everything from your server before it can be cached in his or her browser. This is where public caches come into play. By caching the response on a server (like a proxy server), you can cache something for all users whose requests pass through this server.

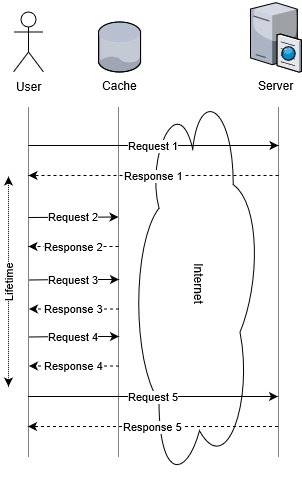

Lifetime

By serving a cached version of a response, you run the risk that a change to the original resource behind the response won't be reflected in the cached version. As caches can store a response indefinitely, you will have to tell the cache how long it may serve the stored response. The time during which a response may be served from a cache is called its lifetime. A cached entry is called fresh while its age is within this lifetime. After the lifetime expires, the entry is said to have gone stale. A cache may remove stale items from its store. Because a stale response may or may not still match the original resource, it has to be validated with the server before it is reused.
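The fresh/stale distinction boils down to comparing a cached entry's age with its lifetime. The sketch below assumes the lifetime comes from a max-age value and that the time of storage is known; `CachedEntry` and `is_fresh` are illustrative names. Real caches compute the age more carefully, for example by taking an `Age` header from upstream caches into account.

```python
from dataclasses import dataclass


@dataclass
class CachedEntry:
    stored_at: float  # Unix time (seconds) at which the response was stored
    max_age: int      # lifetime in seconds, taken from Cache-control: max-age


def is_fresh(entry: CachedEntry, now: float) -> bool:
    """Fresh while the entry's age is below its lifetime; stale from then on."""
    age = now - entry.stored_at
    return age < entry.max_age


entry = CachedEntry(stored_at=1000.0, max_age=600)
print(is_fresh(entry, now=1300.0))  # True: 300 seconds old
print(is_fresh(entry, now=1700.0))  # False: 700 seconds old, so stale
```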

Validation

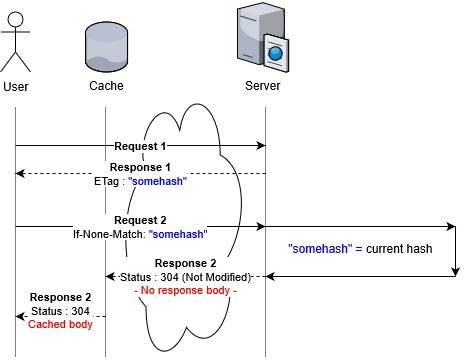

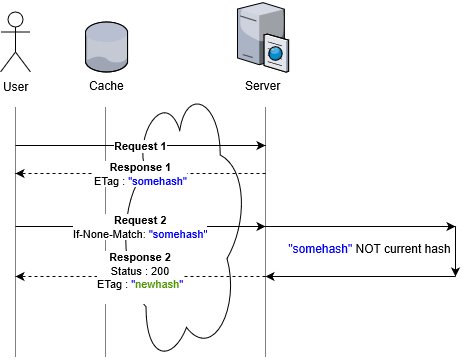

Normally a client making a request has no way of knowing whether the response it receives from a cache has gone stale, as there is no communication between the cache and the origin server. To allow clients to check whether a newer version of a cached response is available, Http caching has a mechanism called revalidation. Revalidation allows clients to ask the origin server whether a newer version of a cached response is available. If the server has a newer version, it returns the updated response, and this new response can then be cached again. If, however, the response has not changed, the server returns a 304 (Not Modified) response with just headers and no content. Http caching usually revalidates a cached response after it has expired, but you can also explicitly ask a cache to revalidate a cached response for every request.

Validation can be done by comparing an identifier of the cached resource, usually a hash of its content, with the identifier of the resource on the server; this identifier is called an ETag. The alternative is comparing the last modified date of the cached resource with the last modified date of the resource on the server.
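A server-side sketch of both validation styles, assuming a plain dict of request headers: the client sends the stored ETag back in an `If-None-Match` header or the stored date in an `If-Modified-Since` header, and the server answers 304 when nothing has changed. The helper names are hypothetical, and using a truncated SHA-256 of the body as the ETag is just one possible choice.

```python
import hashlib
from datetime import datetime, timezone
from email.utils import format_datetime, parsedate_to_datetime
from typing import Dict, Tuple


def make_etag(body: bytes) -> str:
    # An ETag is a quoted string; a hash of the content is one common choice.
    return '"' + hashlib.sha256(body).hexdigest()[:32] + '"'


def etag_matches(if_none_match: str, etag: str) -> bool:
    if if_none_match.strip() == "*":
        return True
    # Weak comparison, as used for If-None-Match: ignore any W/ prefix.
    tags = [tag.strip().removeprefix("W/") for tag in if_none_match.split(",")]
    return etag.removeprefix("W/") in tags


def respond(body: bytes, last_modified: datetime,
            request_headers: Dict[str, str]) -> Tuple[int, Dict[str, str], bytes]:
    """Answer with 200 and the body, or 304 without a body if the client's copy is current.

    last_modified must carry tzinfo=timezone.utc. request_headers is a plain dict
    here; a real server would look header names up case-insensitively.
    """
    etag = make_etag(body)
    headers = {"ETag": etag,
               "Last-Modified": format_datetime(last_modified, usegmt=True)}

    if_none_match = request_headers.get("If-None-Match")
    if_modified_since = request_headers.get("If-Modified-Since")
    if if_none_match is not None:
        # When an ETag validator is present, the date validator is ignored.
        if etag_matches(if_none_match, etag):
            return 304, headers, b""
    elif if_modified_since is not None:
        try:
            since = parsedate_to_datetime(if_modified_since)
        except (TypeError, ValueError):
            since = None  # unparsable date: treat as an unconditional request
        if since is not None:
            if since.tzinfo is None:
                since = since.replace(tzinfo=timezone.utc)
            # Http dates have one-second precision, so drop microseconds.
            if last_modified.replace(microsecond=0) <= since:
                return 304, headers, b""
    return 200, headers, body


lm = datetime(2019, 3, 24, 12, 28, tzinfo=timezone.utc)
status, headers, _ = respond(b"body", lm, {})
print(status, headers["Last-Modified"])  # 200 Sun, 24 Mar 2019 12:28:00 GMT
print(respond(b"body", lm, {"If-None-Match": headers["ETag"]})[0])  # 304
```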

Testing

Testing whether your caching works as expected can simply be done in a browser. When clicking through your site, you can inspect the responses in your browser's development tools to see what gets cached. Be sure to test in all major browsers.

All browsers use caching when users click on links or use the browser's back button. Some browsers handle caching differently when the page is refreshed (pressing F5 on Windows); usually a forced revalidation is triggered in this case. When a page is 'hard refreshed' (Ctrl-F5 on Windows), browsers should fetch fresh versions of all cached resources.

Most browsers allow users to disable caching altogether, either for the entire browser or just when development tools are active. Check these settings before testing a caching implementation.

How to use Http Caching

What changes do you have to make to your web application if you want it to use Http caching? The short answer is: nothing. Every cache store, whether it is a browser or a public cache like a proxy, may cache any resource it thinks is worth caching. So there is a big chance that some of the resources of your website are being cached, even if you didn't specifically configure caching. So why worry about how to configure caching for your web application? If you let caches determine how they handle caching, you run the risk that the wrong resources are cached or that resources which are updated frequently are cached for a long time.

So how do you tell caches what they should cache and how? Http caching can be controlled by adding caching headers to the responses. Caches use these headers to determine whether and how they should cache a certain resource.

Cache-control header

The main header with which to control caching is the aptly named cache-control header. If this header is included in a response, any cache should use the contents of the header to determine how, where and for how long to cache the response.

How long to cache

To instruct caches how long they should serve a cached version of a response you use the max-age directive to set the maximum number of seconds the response may be cached.

Cache-control: max-age=600

The max-age value tells a cache for how many seconds the response may be served from the cache. For static files which are rarely updated, you can set this to a very high value; many sites use a year (31536000 seconds) for such resources. For other resources, try to match the max-age value to how often the specific resource is expected to change. For resources which are requested extremely often but also change frequently, you can set the max-age to a very short duration (minutes to hours) just to prevent excessive load on your server.
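Since max-age is expressed in seconds, it can help to derive the value from a readable duration. A small sketch, with `max_age_directive` as a hypothetical helper name; it also confirms that a year of 365 days is 31536000 seconds.

```python
from datetime import timedelta


def max_age_directive(lifetime: timedelta) -> str:
    """Express a lifetime as a Cache-control max-age directive in whole seconds."""
    return f"max-age={int(lifetime.total_seconds())}"


print(max_age_directive(timedelta(minutes=10)))  # max-age=600
print(max_age_directive(timedelta(hours=1)))     # max-age=3600
print(max_age_directive(timedelta(days=365)))    # max-age=31536000
```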

Where to cache

Some responses should only be cached on a per-user basis. Html pages which show content for a logged-in user, for example, should never be cached in a public cache. If that happened, any user could potentially be served a cached page meant for a specific user. If you want your resource to only be cached by private caches (browsers), add the private directive.

Cache-control: private

A related directive is the public directive. It allows the response to be cached in any cache store, both public and private. This directive also allows caches to store a response that would normally not be eligible for caching, such as a response to a request that carried an Authorization header. Without it, caches typically only store responses to GET requests with status codes like 200, 301, 404 or 206.

Do not cache

Some resources should never be cached. To prevent caching altogether, add the no-store directive.

Cache-control: no-store
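To make the where-to-cache rules concrete, here is a simplified sketch of how a cache could read the private and no-store directives when deciding whether it may store a response. It is an illustration under assumptions, not a complete implementation: it ignores the default eligibility rules (request method, status code, Authorization header) and the less common `private="field"` form, so the public directive has no extra effect here. The function names are hypothetical.

```python
from typing import Dict, Optional


def parse_cache_control(value: str) -> Dict[str, Optional[str]]:
    """Split a Cache-control value into {directive: argument or None}."""
    directives: Dict[str, Optional[str]] = {}
    for part in value.split(","):
        name, sep, arg = part.strip().partition("=")
        if name:
            directives[name.strip().lower()] = arg.strip().strip('"') if sep else None
    return directives


def may_store(cache_control: str, shared_cache: bool) -> bool:
    """Whether a browser (private) or proxy (shared) cache may store the response."""
    directives = parse_cache_control(cache_control)
    if "no-store" in directives:
        return False                 # no cache may keep a copy
    if shared_cache and "private" in directives:
        return False                 # only the user's own browser may keep it
    return True


print(may_store("private, max-age=600", shared_cache=False))     # True
print(may_store("private, max-age=600", shared_cache=True))      # False
print(may_store("public, max-age=31536000", shared_cache=True))  # True
print(may_store("no-store", shared_cache=False))                 # False
```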

Cache the response but always revalidate with the server if a newer version is available

Normally a cached resource is served from the cache for the whole duration set by the max-age directive. If you explicitly want the cache to contact the server before every use of a cached response, to check whether an update is available, use the no-cache directive. This means there will be a request to your server every time the resource is requested. But if the resource on your server has not changed, the server will not return the resource, just a response without a body and a 304 (Not Modified) status code, telling the cache that the resource has not changed.

Cache-control: no-cache
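From the cache's side, no-cache means every use of a stored response starts with a conditional request. A minimal sketch, assuming the stored response carries an ETag and that `fetch` is a hypothetical function that sends request headers to the origin server and returns status, headers and body; `fake_origin` stands in for a real server, and error handling is left out.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple

# Hypothetical transport: takes request headers, returns (status, headers, body).
Fetch = Callable[[Dict[str, str]], Tuple[int, Dict[str, str], bytes]]


@dataclass
class StoredResponse:
    etag: str
    body: bytes


def use_no_cache_response(stored: StoredResponse, fetch: Fetch) -> Tuple[StoredResponse, bool]:
    """Revalidate before every use; return (response to use, whether the body was downloaded)."""
    status, headers, body = fetch({"If-None-Match": stored.etag})
    if status == 304:
        return stored, False      # unchanged: reuse the cached body
    return StoredResponse(headers.get("ETag", ""), body), True


def fake_origin(request_headers: Dict[str, str]) -> Tuple[int, Dict[str, str], bytes]:
    # Hypothetical origin server whose current version has ETag "v2".
    current_etag = '"v2"'
    if request_headers.get("If-None-Match") == current_etag:
        return 304, {"ETag": current_etag}, b""
    return 200, {"ETag": current_etag}, b"new content"


print(use_no_cache_response(StoredResponse('"v2"', b"new content"), fake_origin)[1])  # False
print(use_no_cache_response(StoredResponse('"v1"', b"old content"), fake_origin)[1])  # True
```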

ETag header

This response header contains a fingerprint or hash representing the content of the response. It can be any value, but for static files a hash of the file content is usually used. The client uses the value of this header to validate whether the content of the response has changed, so the server should change this value whenever the resource changes. Most web servers can automatically supply an ETag header for static files.

ETag: "680e251e17774e6485d0f36cdd3d29e2"

Last-Modified header

This response header tells clients when the resource was last changed. The client also uses it to validate whether the content of a resource has changed. As with ETag, most web servers can automatically supply a Last-Modified header for static files. If both the ETag and the Last-Modified header are supplied, the ETag takes precedence: when a revalidation request carries both validators, the server compares the ETag and ignores the date.

Last-Modified: Sun, 24 Mar 2019 12:28:00 GMT

Pragma header

Older caches that follow the HTTP/1.0 specification still use this header. It has a single useful directive, no-cache, which works basically the same as the no-cache directive of the Cache-control header. For backward compatibility, you can add this header whenever you add a Cache-control: no-cache header.

Pragma: no-cache

Expires header

Like the Pragma header, this is a legacy header. It sets the time at which the cached entry should expire. Unlike the max-age directive of the Cache-control header, it sets an exact expiration date and time. When both a Cache-control max-age and Expires are used, Cache-control takes precedence. It is common practice to add both the Cache-control and Expires headers.

Expires: Sun, 24 Mar 2019 12:28:00 GMT
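Adding both headers is mostly a matter of turning the max-age lifetime into an absolute date. A sketch using Python's `email.utils.formatdate`, which produces the date format shown above; `expiry_headers` is a hypothetical helper, and `now` is passed in as a Unix timestamp so the result is easy to check.

```python
import time
from email.utils import formatdate


def expiry_headers(max_age: int, now: float) -> dict:
    """Build matching Cache-control and Expires headers for a lifetime in seconds."""
    return {
        "Cache-Control": f"max-age={max_age}",
        # Expires needs an absolute date; max-age wins if a cache sees both.
        "Expires": formatdate(now + max_age, usegmt=True),
    }


print(expiry_headers(600, now=0))  # Expires: Thu, 01 Jan 1970 00:10:00 GMT
print(expiry_headers(31536000, now=time.time()))
```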

Implementation tips

Once you understand how caching works, you have to determine how to implement it for your website. There are roughly three questions you have to answer to get to a proper implementation plan:

- What to cache?

- How long to cache?

- How to force an update to a cached item?

What to cache?

This question is easier to answer in reverse: what should not be cached? As browsers may cache any resource whose response does not explicitly include a Cache-control: no-store header, you will first have to look for resources you really don't want cached. Look specifically at Html pages, as their content might be dynamically generated on the server. Their content might differ based on the user, the time of day or the geographic location of the user. You really don't want those to end up in a cache, so disable caching for these resources.

Next, look for the resources which are requested most often on your site. Many sites have a number of resources (like stylesheets) which are requested for almost every page.

And lastly, categorize the remaining resources of your site by type (Html, fonts, images, ...) and determine which would benefit from caching the most.
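The three steps above could end up as a small policy function in your server code. This is a hypothetical sketch: the function name, the list of static file extensions, the one-year lifetime for static files and the choice of no-cache for everything else are example values to adapt to your own site.

```python
# Hypothetical list of static file types that are requested on almost every page.
STATIC_EXTENSIONS = (".css", ".js", ".woff2", ".png", ".jpg", ".svg")


def cache_policy(path: str, user_specific: bool) -> str:
    """Pick a Cache-control value for a resource (example values only)."""
    if user_specific:
        # Dynamic, per-user Html: keep it out of every cache.
        return "no-store"
    if path.lower().endswith(STATIC_EXTENSIONS):
        # Frequently requested static files: cache for a year.
        return "public, max-age=31536000"
    # Everything else: may be stored, but revalidated before each use.
    return "no-cache"


print(cache_policy("/account", user_specific=True))       # no-store
print(cache_policy("/css/site.css", user_specific=False))  # public, max-age=31536000
print(cache_policy("/about.html", user_specific=False))    # no-cache
```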

How long to cache?

This one is easy to answer: always aim at a max-age of about one year. Of course there are cases where this is not possible. If you have a resource which is sure to change every day or every release, you should not set a max-age longer than the interval between those changes, or users will keep seeing the old version. In very rare cases you will want to cache a dynamic resource for a very short time (hours or even minutes). This way you can remove some load from your servers while still maintaining some of the dynamic behavior of the resource.

How to force an update to a cached item?

When you update a resource of your site and this resource is cached, any user who has a cached version of it will not receive your update until their cached copy expires. This can make releasing an update to your site very difficult. The most common solution to this issue is to change the Url of the resource whenever you update it. The following examples give some hints on how to do this:

- Put the latest change date of the resource in the filename (like stylesheet20190102.css)

- Put the version number of the resource in the filename (like stylesheet-v2.34.css)

- Put the latest change date of the resource in GET parameters (like stylesheet.css?date=20190102)

- Put the version number of the resource in GET parameters (like stylesheet.css?v=2.34)

As a resource is cached based on its Url, the renamed resource will be treated as a new cache item. Of course, you will have to change the code of your site to use the new resource name.
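The filename and GET parameter variants from the list above can be generated with a couple of string helpers. This is a hypothetical sketch: the function names are illustrative, and where the version string comes from (a release number or a change date) is up to your build process.

```python
import os


def versioned_filename(path: str, version: str, prefix: str = "-v") -> str:
    """Put the version in the filename: stylesheet.css becomes stylesheet-v2.34.css."""
    root, ext = os.path.splitext(path)
    return f"{root}{prefix}{version}{ext}"


def versioned_query(path: str, version: str, param: str = "v") -> str:
    """Put the version in a GET parameter: stylesheet.css becomes stylesheet.css?v=2.34."""
    separator = "&" if "?" in path else "?"
    return f"{path}{separator}{param}={version}"


print(versioned_filename("stylesheet.css", "2.34"))                  # stylesheet-v2.34.css
print(versioned_filename("stylesheet.css", "20190102", prefix=""))   # stylesheet20190102.css
print(versioned_query("stylesheet.css", "2.34"))                     # stylesheet.css?v=2.34
print(versioned_query("stylesheet.css", "20190102", param="date"))   # stylesheet.css?date=20190102
```

Because every update produces a new Url, resources referenced this way can safely be given the long max-age recommended earlier.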

Conclusion

A good implementation of Http caching can give your web application a serious performance boost. But an improper implementation can lead to serious problems when updating your site and can even introduce security risks when content meant for a single user is cached and exposed to other users. Knowing how caching works and how to tweak it can make your web application faster, more secure and even prevent sites from becoming unavailable under high loads.